pytorch - Calculating key and value vector in the Transformer's decoder block - Data Science Stack Exchange

GitHub - sooftware/speech-transformer: Transformer implementation specialized in speech recognition tasks using Pytorch.

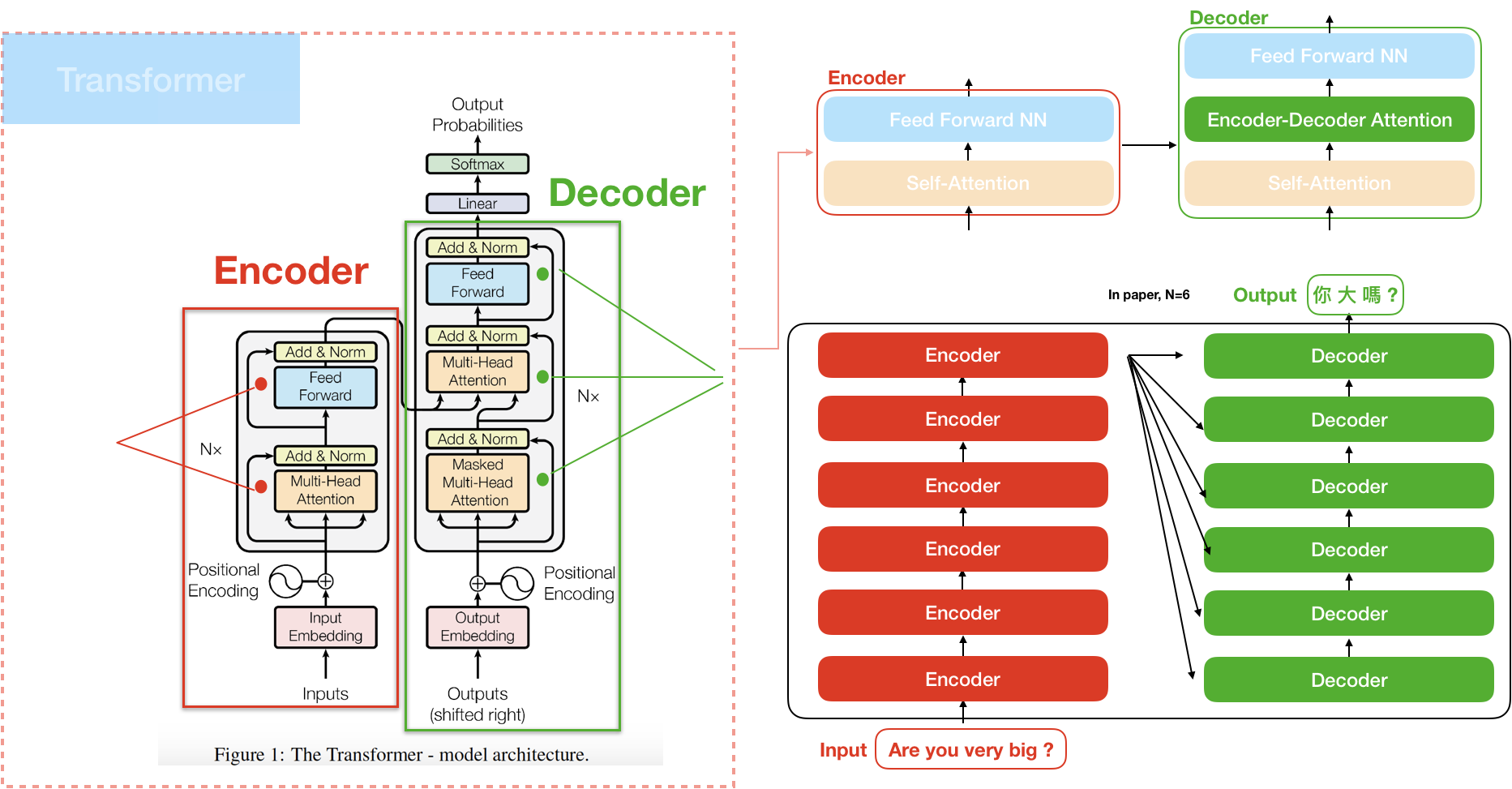

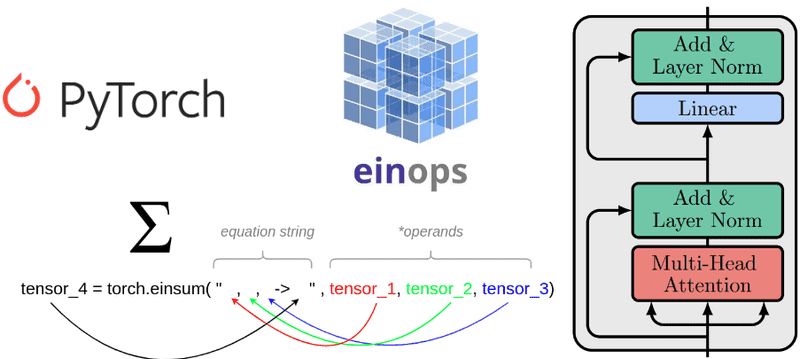

Understanding einsum for Deep learning: implement a transformer with multi-head self-attention from scratch | AI Summer

A Practical Demonstration of Using Vision Transformers in PyTorch: MNIST Handwritten Digit Recognition | by Stan Kriventsov | Towards Data Science

python - pytorch transformer with different dimension of encoder output and decoder memory - Stack Overflow

PipeTransformer: Automated Elastic Pipelining for Distributed Training of Large-scale Models | PyTorch

Transformers for Natural Language Processing: Build innovative deep neural network architectures for NLP with Python, PyTorch, TensorFlow, BERT, RoBERTa, and more: Rothman, Denis: 9781800565791: Amazon.com: Books